Information and Communication Technology becomes very easy especially for the students of computer background as well as it becomes a bit tufted for non-technical background students. In such a situation, in this post “PYQ of Information and Communication Technology“ we have discussed some Previous Year Questions (PYQ) of Information and Communication Technology (ICT) here with an explanation so that it becomes even easier to understand for those students who find it a bit tuff.

MOODLE is an abbreviation of

- Modular Object-Oriented Distance Learning Environment

- Modular Object-Oriented Dynamic Learning Environment

- Modular Object-Oriented Distance Legislative Environment

- Modular Object-Oriented Distance Legal Environment

Solution

Key-Points

• Moodle stands for Modular Object-Oriented Dynamic Learning Environment.

• Moodle was designed to provide educators, administrators, and learners with an open, robust, secure, and free platform to create and deliver personalized learning environments.

• Moodle is a user-friendly Learning Management System (LMS) that supports learning and training needs for a wide range of institutions and organizations across the globe.

• Today, Moodle is the most widely used Learning Management System in the world, with well over 100,000 registered implementations worldwide supporting over 150 million learners.

• Moodle’s open source project is managed by a dedicated team at Moodle HQ with a head office in Perth, Australia, and satellite offices around the world. Moodle’s modular nature and inherent flexibility make it an ideal platform for both academic and enterprise-level applications of any size.

• Moodle is a learning platform designed to provide educators, administrators, and learners with a single robust, secure and integrated system to create personalized learning environments.

• Using Moodle, you can create classes, make attendance lists, deliver learning materials, give quizzes, send feedback, and much more. There is a huge amount of flexibility and reconfigurability within the platform.

Therefore, option 2 is the correct answer.

What is bit rate?

- Number of bits stored in computer

- Number of bits per second that can be transmitted over a network

- Number of bits per hour stored in a computer over a network

- Binary digit converted to hexa decimal number

Solution

Bit rate is commonly measured in bits per second (bit/s) and often includes a prefix, such as “kilo’, ‘mega’, ‘Giga’, or ‘Tera’.

• One kilobit per second (kbit/s) is the equivalent of 1000 bits per second.

• In video streaming, bitrate refers to the number of bits that are conveyed or processed in a given unit of time.

• In most computing and digital communication environments, one byte per second (1 Bls) corresponds to 8 bits.

Hence, we may say that Bit rate is the number of bits per second that can be transmitted over a network.

Cloud computing has the following distinct characteristics :

(A) The service is hosted on the internet.

(B) It is made available by a service provider.

(C) It computes and predicts rain when the weather is cloudy.

(D) The service is fully managed by the provider

Choose the correct answer from the options given below:

- (A), (B) only

- (A), (B), (C) only

- (A), (D) only

- (A), (B), (D) only

Solution

Cloud Computing:

• Cloud computing is an emerging trend in the field of information technology, where computer-based services are delivered over the Internet or the cloud, and it is accessible to the user from anywhere using any device.

• The services comprise software, hardware (servers), databases, storage, etc.

• These resources are provided by companies called cloud service providers and usually charge on a pay-per-use basis, like the way we pay for electricity usage.

• Cloud computing is an infrastructure and software model that enables ubiquitous access to shared pools of storage, networks, servers, and applications.

• It allows for data processing to be done on a privately-owned cloud or a third-party server. This creates maximum speed and reliability. But the greatest benefit is its ease of installation, low maintenance, and scalability. This way it grows with your needs.

- We already use cloud services while storing our pictures and files as backups on the Internet or host a website on the Internet.

• Through cloud computing, a user can run a bigger application or process a large amount of data without having the required storage or processing power on their personal computer as long as they are connected to the Internet.

• Besides other numerous features, cloud computing offers cost-effective, on-demand resources. A user can avail of need-based resources from the cloud at a very reasonable cost.

• Examples Of Cloud Storage: Dropbox, Gmail, Facebook

. Examples Of Marketing Cloud Platforms: Maropost For Marketing, Hubspot, Adobe Marketing Cloud

• Examples of Cloud Computing In Education: Slide Rocket, Ratatype, Amazon Web Services

. Examples Of Cloud Computing In Healthcare: ClearDATA, Dell’s Secure Healthcare Cloud, IBM Cloud

Thus, option 4 is the correct answer.

Given below are two statements:

Statement l: External hard disk is a primary memory:

Statement II: In general, pen drives have more storage capacity than external hard disks.

In the light of the above statements, choose the correct answer from the options given below:

- Both Statement I and Statement il are true

- ✓Both Statement I and Statement il are false

- Statement is correct but Statement Il is false

- Statement is incorrect but Statement II is true

Solution

Primary memory is computer memory that is accessed directly by the CPU.

• A computer’s internal hard drive is often considered a primary storage device, while external hard drives and other external media are considered secondary storage devices.

• RAM, or random access memory, consists of one or more memory modules that temporarily store data while a computer is running

• RAM, commonly called “memory,” is considered primary storage, since it stores data that is directly accessible by the computer’s

CPU.

• In general, the external hard drive has a larger capacity comparing the flash drive.

• The main reason lies in the storage media.

• As we all know, the flash drive only uses flash memory as its storage medium, while the external hard drive has more options.

• The pen drive is a type of flash drive named for its small pen-like appearance. Pen drives can be inserted directly into a USB port

without a USB cord.

• Pen drives are faster, smaller, more durable, and have more capacity compared to floppy disks and CDs. external hard drives

are better than flash drives storage capacity.

Hence, we may say that the External hard disk is a secondary memory, pen drives have less storage capacity than external hard disks.

Application of ICT in research is relevant in which of the following stages?

i) Survey of related studies

ii) Data collection in the field

iii) Data Analysis

iv) Writing the thesis

v) Indexing the references

Choose the most appropriate option from those given below:

- ii) iv) and v)

- i), iii) and v)

- i), ii) and iv)

- ii), iii) and iv)

Solution

Information Technology (IT) and Information and Communication Technology (ICT) are very often interchangeably used in the

context of modern technology infrastructure. ICT is a broad and comprehensive term, which comprises information technology and

communication technology. Information technology includes radio, television, computer and the Internet, teleconferencing, and mobile.

According to the American sociologist Earl Robert Babbie, “Research is a systematic inquiry to describe, explain, predict, and control the observed phenomenon. Research involves inductive and deductive methods.”

1. Over the past decade, there has been a significant increase in the scope of ICT to be employed in the fields of education and research.

2. Information and Communication Technology has done so much to remove from the researcher the constraints of speed, cost, and distance.

3. On the whole, information and communication technology has led to improvements in research.

4. New avenues for scientific exploration have opened. The amount of data that can be analyzed has been expanded, as has the complexity of analyses. And researchers can collaborate more widely and efficiently.

5. Though the use of ICT can be employed in every stage of the research process, the scope for employing ICT support is relatively more in the stages of Survey, Data Analysis, and Indexing The References.

6. Surveying the sample audience can be done through the use of ICT. Google forms, emails, social networking sites, etc. can be used to obtain information from our sample audience. Surveying through the help of ICT saves time, cost, and helps us reach a comparatively larger sample audience.

7. Analyzing data with computers is among the most widespread uses of information and communication technology in research.

8. Computer hardware for these purposes comes in all sizes, ranging from personal computers to microprocessors dedicated to specific instrumentational tasks, large mainframe computers serving a university campus or research facility, and supercomputers.

Computer software ranges from general-purpose programs that compute numeric functions or conduct statistical analysis to specialized applications of all sorts.

9. A citation index is an ordered list of cited articles along with a list of citing articles. The cited article is identified as the reference and the citing article as the source. The index is prepared to utilize the association of ideas existing between the cited and the citing articles, as the fact is that whenever a recent paper cites a previous paper there always exists a relation of ideas, between the two papers. Citation indexing provides subject access to bibliographic records in an indirect but powerful manner.

10. ICT helps to identify appropriate information sources so that their contribution can be acknowledged and plagiarism can be avoided.

Thus, option 2 is the correct answer.

Which of the following statements is/are true?

A. Fibre optic cables are wooden fibres to provide high quality transmission.

B. Wireless communication provides anytime, anywhere connection to both computers and telephones.

C. Mobile phones are capable of providing voice communication and also digital messaging service.

Choose the correct answer from the options given below

- A and C only

- B and C only

- A and B only

- C only

Solution

Key-Points

A. Statement: Fibre optic cables are wooden fibers to provide high-quality transmission-False

Explanation:

• An optical fiber is a flexible, transparent fiber made by drawing glass (silica) or plastic to a diameter slightly thicker than that of a human hair

• Optical fibers are used most often as a means to transmit light[a] between the two ends of the fiber and find wide usage in fiber- optic communications, where they permit transmission over longer distances and at higher bandwidths (data transfer rates) than electrical cables.

B. Statement: Wireless communication provides anytime, anywhere connection to both computers and telephones- True

Explanation:

• Wireless communications allow wireless networking between desktop computers, laptops, tablet computers, cell phones, and other related devices.

• Wireless networks are cheaper to install and maintain.

• Data is transmitted faster and at a high speed.

• Reduced maintenance and installation cost compared to other forms of networks.

• A wireless network can be accessed from anywhere, anytime.

. Working professionals these days can access the Internet anywhere and anytime without carrying cables or wires.

• This also permits professionals to complete their work from remote locations.

C. Statement: Mobile phones are capable of providing voice communication and also digital messaging service – True

Explanation:

• A mobile phone, cellular phone, cell phone, cellphone, handphone, or handphone, sometimes shortened to simply a mobile, cell, or just phone, is a portable telephone that can make and receive calls over a radio frequency link while the user is moving within a telephone service area. In addition to telephony, digital mobile phones support a variety of other services, such as text messaging, MMS, email, Internet access, short-range wireless communications (infrared, Bluetooth), business applications, video games, and digital photography.

• Mobile phones offering only those capabilities are known as feature phones; mobile phones which offer greatly advanced computing capabilities are referred to as smartphones.

Thus, only statements B and C are correct. Therefore, Option 2 is the correct answer.

Extra browser window of commercials that open automatically on browsing web pages is called

- Spam

- Virus

- Phishing

- ✓ Pop-up

Solution

Web page:

• A web page is a simple document displayable by a browser.

• A web page can embed a variety of different types of resources such as style information – controlling a page’s look-and-feel.

• Online advertising, also known as online marketing, Internet advertising, digital advertising, or web advertising, is a form of marketing and advertising which uses the Internet to deliver promotional marketing messages to consumers.

Key-Points

• A pop-up is a graphical user interface (GUI) display area, usually a small window, that suddenly appears (“pops up”) in the foreground of the visual interface.

• A pop-up window is a type of window that opens without the user selecting “New Window” from a program’s File menu.

• Pop-up windows are often generated by websites that include pop-up advertisements.

• Ads that appear behind open windows are also called “pop-under” ads.

• Pop-ups open automatically while browsing web pages.

Therefore, the extra browser window of commercials that open automatically on browsing web pages is called Pop-up.

Additional Information

1. Spam

• Spam is any kind of unwanted, unsolicited digital communication, often an email, that gets sent out in bulk.

• Spam is a huge waste of time and resources. The Internet service providers (ISP) carry and store the data.

Spamming is the use of messaging systems to send an unsolicited message (spam) to large numbers of recipients for the purpose of commercial advertising, for the purpose of non-commercial proselytizing, or for any prohibited purpose (especially the fraudulent purpose of phishing).

2. Virus

• A computer virus is a type of malicious code or program written to alter the way a computer operates and is designed to spread from one computer to another.

• A computer virus is a malware attached to another program (such as a document), which can replicate and spread after an initial execution on a target system where human interaction is required.

• Many viruses are harmful and can destroy data, slow down system resources, and log keystrokes.

3. Phishing

• Phishing is a cybercrime in which a target or targets are contacted by email, telephone, or text message by someone posing as a legitimate institution to lure individuals into providing sensitive data such as personally identifiable information, banking, and credit card details and passwords.

• Phishing attacks are the practice of sending fraudulent communications that appear to come from a reputable source. It is usually done through email.

• The goal is to steal sensitive data like credit card and login information or to install malware on the victim’s machine.

Which of the following is correct with respect to the size of the storage units?

- Terabyte < Petabyte < Exabyte < Zettabyte

- Petabyte < Exabyte < Zettabyte Terabyte

- Exabyte < Zettabyte Terabyte < Petabyte

- Zettabyte < Exabyte Petabyte < Terabyte

Solution

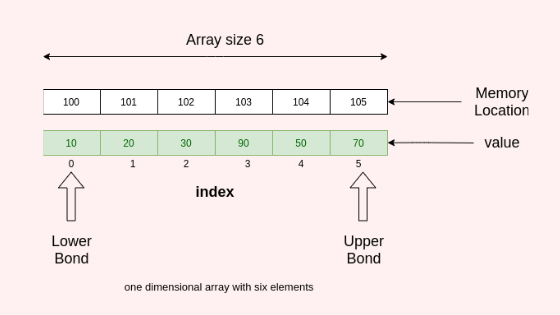

CPU consists of Memory or storage units that can store instructions, data and intermediate results. This unit supplies information to other units of the computer when needed. It is also known as an internal storage unit or the main memory or primary storage or Random Access Memory (RAM).

Key-Points

• Storage capacity is expressed in terms of Bytes. The data is represented as binary digits. (Os and1s).

• Hierarchy is as follows:

Bit< Nibble< Byte< KB< MB< GB< TB< PB< XB< ZB YB

where KB stands for kilobyte

- MB stands for megabyte

- GB stands for gigabyte

- TB stands for terabyte

- PB stands for petabyte

- XB stands for exabyte

- ZB stands for Zetabyte

- YB stands for yottabyte

Additional Information

- 4 bit = 1 Nibble

- 8 bit = 1 byte

- 1024 byte = 1 KB

- 1024 KB = 1MB

- 1024 MB = 1GB

- 1024 GB = 1TB

- 1024 TB = 1PB

- 1024 PB = 1XB

- 1024 XB = 1ZB

- 1024 ZB = 1YB

The correct solution is “Terabyte Petabyte< Exabyte< Zettabyte”

Which of the following is true about the wireless networking?

(A) It is easier to expand the network

(B) Data is less secured than wired system

(C) Reliability is more than wired

(D) Faster than wired network

Choose the correct answer from the options given below:

- (A), (B), (D) only

- (A), (B) only

- (B) (C) only

- (A), (B) (C) only

Solution

Wireless networking is a method by which homes, telecommunications networks, and business installations avoid the costly process of introducing cables into a building, or as a connection between various equipment locations. The main purpose of using a wireless network is its flexibility, roaming, low cost, and high standard.

Types of Wireless Networks.

- 1. WLAN (Wireless Local Area Network)

- 2. WWAN (Wireless Wide Area Network)

- 3. WMAN (Wireless Metropolitan Area Network)

- 4. WPAN (Wireless Personal Area Network)

- 5. Ad-hoc Network.

- 6. Hybrid Network.

Wi-Fi is a wireless networking technology that allows devices such as computers (laptops and desktops), mobile devices (smartphones and wearables), and other equipment (printers and video cameras) to interface with the Internet. Internet connectivity occurs through a wireless router.

Key-Points

• In general, wireless networks are less secure than wired networks since the communication signals are transmitted through the air. Because the connection travels via radio wave, it can easily be intercepted if the proper encryption technologies (WEP, WPA2) are not in place.

• Wired networks are generally much faster than wireless networks. This is mainly because a separate cable is used to connect each device to the network with each cable transmitting data at the same speed. A wired network is also faster since it never is weighed down by unexpected or unnecessary traffic.

• Wireless networks enable multiple devices to use the same internet connection remotely, as well as share files and other resources. There are also disadvantages to wireless networks, however, especially when you compare them with wired networks, which generally maintain a faster internet speed and are more secure.

Additional Information

Benefits of Wireless Network

• Increased Mobility: Wireless networks allow mobile users to access real-time information so they can roam around your company’s space without getting disconnected from the network. This increases teamwork and productivity company-wide that is not possible with traditional networks.

Installation Speed and Simplicity: Installing a wireless network system reduces cables, which are cumbersome to set up and can impose a safety risk, should employees trip on them. It can also be installed quickly and easily when compared to a traditional network.

• Wider Reach of the Network: The wireless network can be extended to places in your organization that are not accessible for wires and cables.

• More Flexibility: Should your network change in the future, you can easily update the wireless network to meet new configurations.

• Reduced Cost of Ownership over Time: Wireless networking may carry a slightly higher initial investment, but the overall expenses over time are lower. It also may have a longer lifecycle than a traditionally connected network.

• Increased Scalability: Wireless systems can be specifically configured to meet the needs of specific applications. These can be easily changed and scaled depending on your organization’s needs.

Therefore Option 2 is the correct answer.

Given below are two statements:

Statement I: Use of programmed instructional material as supplement has the potential to optimise learning outcomes.

Statement II: ICT, if used, judiciously will render teaching-learning systems effective and efficient.

In the light of the above statements, choose the most appropriate answer from the options given below:

- ✓ Both Statement I and Statement Il are correct

- Both Statement and Statement Il are incorrect

- Statement is correct but Statement Il is incorrect

- Statement is incorrect but Statement Il is correct

Solution

Important Point

Programmed Instructional material :

• It is a technique of self-instruction, one can learn by himself or herself

• The instruction could be computer-assisted or printed format

• The main focus is to develop materials that maximize learning foster understanding and reinforcement to the learner.

• Programmed Instructional material uses active responding and immediate feedback as important principles for learning

• The significant characteristics of Programmed Instructional material are

o Active responding

o Immediate feedback

o Individual difference is taken care

o Continuous evaluation

o Immediate confirmation

o Self-pacing

Thus the use of programmed instructional material as a supplement has the potential to optimize learning outcomes.

Information and Communication Technology (ICT) in Teaching:

• It is an integration of Information Technology and telecommunication system using computers and necessary devices.

. It can use wireless and wired media.

• It covers any product that will store, retrieve, manipulate, transmit, or receive information electronically in a digital form

• ICT has its use in education, banking, health, e-commerce services, social networking, online research, etc.

• It has a broader and comprehensive use of devices.

• ICT enables learning through the use of computers, overhead projectors, videos, documentaries, whiteboards, etc.

• It is an infrastructure to enable modern computing.

• Information and Communication Technology (ICT) in teaching comprises Online learning, Learning through Mobile Application, and Web-based learning

• It is a modern teaching technique

• It has the potential to optimize learning outcomes

Therefore, Both Statement I and Statement Il are correct.

Which of the following statements are true?

(A) An algorithm may produce no output

(B) An algorithm expressed in a programming language is called a computer program.

(C) An algorithm is expressed in a graphical form known as a flowchart

(D) An algorithm can have an infinite sequence of instructions.

Choose the correct answer from the options given below:

- (A) and (B) only

- (A), (B) and (D) only

- (C) and (D) only

- (B) and (C) only

Solution

The origin of the term Algorithm is traced to Persian astronomer and mathematician, Abu Abdullah Muhammad ibn Musa Al-Khwarizmi (c. 850 AD) as the Latin translation of AlKhwarizmi was called ‘Algorithmi’.

Key-Points

• A programmer writes a program to instruct the computer to do certain tasks as desired.

• The computer then follows the steps written in the program code.

• Therefore, the programmer first prepares a roadmap of the program to be written, before actually writing the code.

• Without a roadmap, the programmer may not be able to clearly visualize the instructions to be written and may end up developing a program that may not work as expected.

• Such a roadmap is nothing but the algorithm which is the building block of a computer program.

• Hence, it is clear that we need to follow a sequence of steps to accomplish the task.

• Such a finite sequence of steps required to get the desired output is called an algorithm. It will lead to the desired result in a finite amount of time if followed correctly.

• The algorithm has a definite beginning and a definite end and consists of a finite number of steps.

• In mathematics and computer science, an algorithm is a finite sequence of well-defined, computer-implementable instructions, typically to solve a class of problems or to perform a computation or produce the desired output.

• Algorithms are always unambiguous and are used as specifications for performing calculations, data processing, automated reasoning, and other tasks.

• We can express an algorithm in many ways, including natural language, flow charts, pseudocode, and of course, actual programming languages.

• An algorithm expressed in a programming language is called a computer program.

• An algorithm is expressed in a graphical form known as a flowchart.

• For example, searching using a search engine, sending a message, finding a word in a document, booking a taxi through an app, performing online banking, playing computer games, all are based on algorithms.

Thus, option 4 is the correct answer.

What is the full form of the abbreviation ISP?

- International Software Product

- Internet Service Provider

- Internet Server Product

- Internet Software Provider

Solution

Key-Points

1. An Internet service provider (ISP), a company that provides Internet connections and services to individuals and organizations.

2. In addition to providing access to the Internet, ISPs may also provide software packages (such as browsers), e-mail accounts, and a personal Web site or home page.

3. ISPs can host Web sites for businesses and can also build the Web sites themselves.

4. ISPs are all connected to each other through network access points, public network facilities on the Internet backbone.

The full form of the abbreviation ISP is Internet Service Provider.

A firewall is a software tool that protects

(A) Server

(B) Network

(C) Fire

(D) Individual Computer

Choose the correct answer from the options given below:

- (A), (C) only

- (B) (C) only

- (A), (B) (C) only

- (A)(B) (D) only

Solution

Firewall:

1. A firewall is a system designed to prevent unauthorized access to or from a private network.

2. You can implement a firewall in either hardware or software form, or a combination of both.

3. Firewalls prevent unauthorized Internet users from accessing private networks connected to the internet, especially intranets.

4. All messages entering or leaving the intranet (the local network to which you are connected) must pass through the firewall, which examines each message and blocks those that do not meet the specified security criteria.

5. In protecting private information, a firewall is considered the first line of defense; it cannot, however, be considered the only such line.

6. Firewalls are generally designed to protect network traffic and connections, and therefore do not attempt to authenticate individual users when determining who can access a particular computer or network.

Thus we can conclude by saying a firewall is a software tool that protects servers, networks, and individual computers.

A type of memory that holds the computer startup routine is

- Cache

- RAM

- DRAM

- ROM

Solution

Key-Points

• ROM stands for Read-Only Memory.

• ROM is a storage device that is used with computers and other electronic devices.

• Data stored in ROM may only be read.

• ROM is used for firmware updates, which means It contains the basic instructions for what needs to happen when a computer is powered on.

• Firmware is also known as BIOS, or basic input/output system.

• ROM is non-volatile storage, which means the information is maintained even if the component loses power.

• ROM is located on a BIOS chip which is plugged into the motherboard.

• ROM plays a critical part in booting up or starting up, your computer.

Important Point

Random Access Memory (RAM)

• This memory allows writing as well as reading of data, unlike ROM which does not allow writing of data on to it.

It is volatile storage because the contents of RAM are lost when the power (computer) is turned off.

• If you want to store the data for later use, you have to transfer all the contents to a secondary storage device.

Dynamic random access memory (DRAM)

It is a type of semiconductor memory that is typically used for the data or program code needed by a computer processor to function.

DRAM is a common type of random access memory (RAM) that is used in personal computers (PCs) workstations and servers.

Cache

Cache primarily refers to a thing that is hidden or stored somewhere, or to the place where it is hidden.

It has recently taken on another common meaning, “short-term computer memory where information is stored for easy retrieval.”

The correct answer is Read-Only Memory (ROM).